The file to recognise can be provided both by including the audio signal into the HTTP request payload (encoded with Base64) or by giving the URI of the file (currently, only Google Storage can be used).

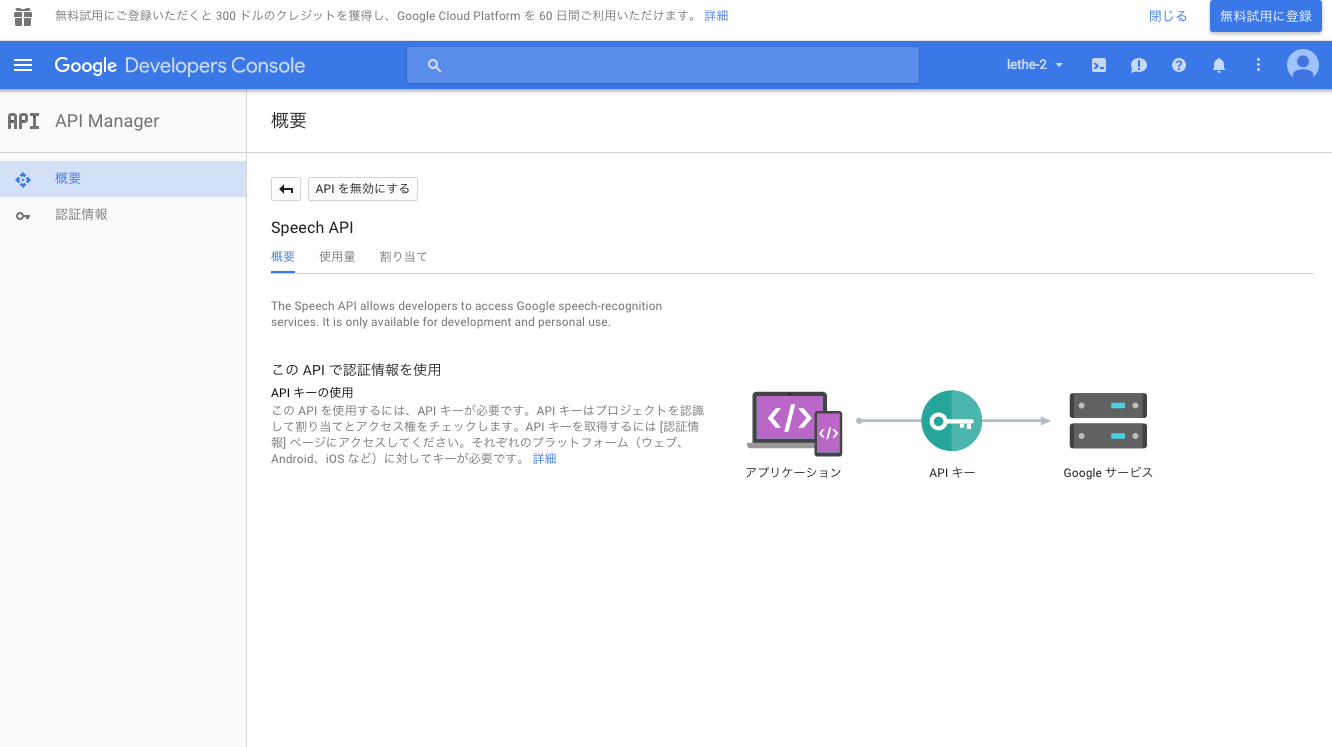

Optionally, it can be requested to return multiple alternatives in addition to the best-matching, each one with the estimated accuracy. The batch processing is very straightforward just by providing the audio file to process and describing its format the API returns the best-matching text, together with the recognition accuracy. The API, still in alpha, exposes a RESTful interface that can be accessed via common POST HTTP requests. An Outline of the Google Cloud Speech API Now that such technology will be accessible as a cloud service to developers, it will allow any application to integrate speech-to-text recognition, representing a valuable alternative to the common Nuance technology (used by Apple’s Siri and Samsung’s S-Voice, for instance) and challenging other solutions such as the IBM Watson speech-to-text and the Microsoft Bing Speech API. Speech-to-text features are used in a multitude of use cases including voice-controlled smart assistants on mobile devices, home automation, audio transcription, and automatic classification of phone calls. The neural network is updated as new speech samples are collected by Google, so that new terms are learned and the recognition accuracy keeps on increasing. The capability to convert voice to text is based on deep neural networks, state-of-the-art machine learning algorithms recently demonstrated to be particularly effective for pattern detection in video and audio signals. This speech recognition technology has been developed and already used by several Google products for some time, such as the Google search engine where there is the option to make voice search. Google recently opened its brand new Cloud Speech API – announced at the NEXT event in San Francisco – for a limited preview. 
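To make the request format concrete, here is a minimal Python sketch of the two ways of supplying audio (inline Base64 content or a Cloud Storage URI) and of reading back the alternatives. It assumes the field names of the later v1 REST reference (`POST https://speech.googleapis.com/v1/speech:recognize`, with `encoding`, `sampleRateHertz`, `languageCode`, `maxAlternatives`, `content` and `uri`); the alpha discussed here may have used slightly different names. The helper names `build_recognize_request` and `alternatives` are hypothetical, and the sketch only builds the JSON body and parses a response: authentication and the HTTP call itself are left out.

```python
import base64
import json
from typing import List, Optional, Tuple

# Hypothetical helpers. Field names follow the Cloud Speech v1 REST reference
# (speech:recognize); the alpha API described in the text may have differed.


def build_recognize_request(
    audio_bytes: Optional[bytes] = None,
    gcs_uri: Optional[str] = None,
    encoding: str = "LINEAR16",
    sample_rate_hertz: int = 16000,
    language_code: str = "en-US",
    max_alternatives: int = 1,
) -> dict:
    """Build the JSON body for a synchronous recognition request.

    Exactly one audio source is allowed: raw bytes, sent inline as Base64,
    or a Google Cloud Storage URI such as "gs://bucket/audio.raw".
    """
    if (audio_bytes is None) == (gcs_uri is None):
        raise ValueError("provide exactly one of audio_bytes or gcs_uri")
    if audio_bytes is not None:
        # Inline audio travels inside the JSON payload as Base64 text.
        audio = {"content": base64.b64encode(audio_bytes).decode("ascii")}
    else:
        # Stored audio is referenced by URI instead of being uploaded.
        audio = {"uri": gcs_uri}
    return {
        "config": {
            # Describes the audio format so the service can decode it.
            "encoding": encoding,
            "sampleRateHertz": sample_rate_hertz,
            "languageCode": language_code,
            # Values above 1 ask for extra hypotheses besides the best match.
            "maxAlternatives": max_alternatives,
        },
        "audio": audio,
    }


def alternatives(response: dict) -> List[Tuple[str, float]]:
    """Flatten a recognition response into (transcript, confidence) pairs.

    Pairs keep the order of the response, where each result lists its
    most probable alternative first.
    """
    pairs = []
    for result in response.get("results", []):
        for alt in result.get("alternatives", []):
            pairs.append((alt.get("transcript", ""), alt.get("confidence", 0.0)))
    return pairs


if __name__ == "__main__":
    body = build_recognize_request(gcs_uri="gs://example-bucket/call.raw",
                                   max_alternatives=3)
    print(json.dumps(body, indent=2))

    # Hypothetical response, shaped like the API's JSON output.
    sample = {"results": [{"alternatives": [
        {"transcript": "turn on the lights", "confidence": 0.93},
        {"transcript": "turn on the light", "confidence": 0.81},
    ]}]}
    for text, conf in alternatives(sample):
        print(f"{conf:.2f}  {text}")
```

The `(audio_bytes is None) == (gcs_uri is None)` check enforces the either/or choice between inline content and a stored file, mirroring the two options the API offers.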
Google Cloud is giving business clients the option to train and deploy their own custom voices synthesized by its text-to-speech (TTS) API. The voice models can be trained on recordings supplied by the client and integrated anywhere the business wants a branded voice AI, such as customer service or phone-based commerce.

Synthesized Speech

Synthesizing voices using AI lets businesses save time and resources in call centers and other customer interactions. However, Google's library of voice models cannot include an ideal choice for every client that wants a voice identifiable as a kind of audio logo. Now clients can have a branded voice as part of the conversational AI platform, saying whatever lines they choose without needing a voice actor or spokesperson to record new lines every time. The custom voice feature started beta testing a year and a half ago as part of the Google Cloud Contact Center AI release, and Google appears to have worked out any technical or procedural issues with it.

“It’s important for all companies to build a strong identity and brand association with their conversational AI systems, and this starts with the synthetic voice,” Google Cloud speech product manager Calum Barnes explained in a blog post. “For businesses looking to build a strong brand identity, establishing a unique voice can help turn mobile app interactions or customer service based on interactive voice responses (IVR) into differentiated customer experiences. Our TTS API has included a speech synthesis service with a static list of voices for some time, but now, with Custom Voice, moving beyond these predefined options is easier than ever.”

Interested businesses can submit recordings of the voice they want to use to Google Cloud, and the AI will train a voice model that then works with the TTS API much as the Google-created voices do. The website offers guidance on what to submit, but Google won't approve the voice model until it confirms that the model doesn't violate Google's AI Principles and that the person recording the audio knows what their voice will be used for and has consented.

Google regularly upgrades its transcription and speech synthesis services as enterprise conversational AI continues to grow. The tech giant reworked the visual interface for its speech-to-text API last month to make it easier for non-technical developers to design and manage the API for their business. DeepMind's WaveNet technology makes transforming audio into text and back feasible, and it's for more than just customer service. For instance, Wendy's and Google Cloud are adding AI and voice tools to the restaurant chain's drive-thrus, so that customer voices will be translated into visual text and anything the Wendy's AI says will sound like whatever voice the brand chooses, presumably that of the restaurant's fictional namesake.

Discover the Strengths and Weaknesses of Google Cloud Speech API in this Special Report by Cloud Academy's Roberto Turrin.
